Big Data Applications (batch, stream, hybrid)

Copyright (c) 2013-2021 S. Varrette and UL HPC Team <hpc-team@uni.lu>

The objective of this tutorial is to demonstrate how to build and run on top of the UL HPC platform a couple of reference analytics engine for large-scale Big Data processing, i.e. Hadoop, Flink or Apache Spark.

Pre-requisites

Ensure you are able to connect to the UL HPC clusters.

In particular, recall that the module command is not available on the access frontends. For all tests and compilation, you MUST work on a computing node

(laptop)$ ssh aion-cluster # or iris-cluster

Now you'll need to pull the latest changes in your working copy of the ULHPC/tutorials you should have cloned in ~/git/github.com/ULHPC/tutorials (see "preliminaries" tutorial)

(access)$ cd ~/git/github.com/ULHPC/tutorials

(access)$ git pull

Now configure a dedicated directory ~/tutorials/bigdata for this session

# return to your home

(access)$> mkdir -p ~/tutorials/bigdata

(access)$> cd ~/tutorials/bigdata

# create a symbolic link to the reference material

(access)$> ln -s ~/git/github.com/ULHPC/tutorials/bigdata ref.d

# Prepare a couple of symbolic links that will be useful for the training

(access)$> ln -s ref.d/scripts . # Don't forget trailing '.' means 'here'

(access)$> ln -s ref.d/settings . # idem

(access)$> ln -s ref.d/src . # idem

Advanced users (eventually yet strongly recommended), create a Tmux session (see Tmux cheat sheet and tutorial) or GNU Screen session you can recover later. See also "Getting Started" tutorial .

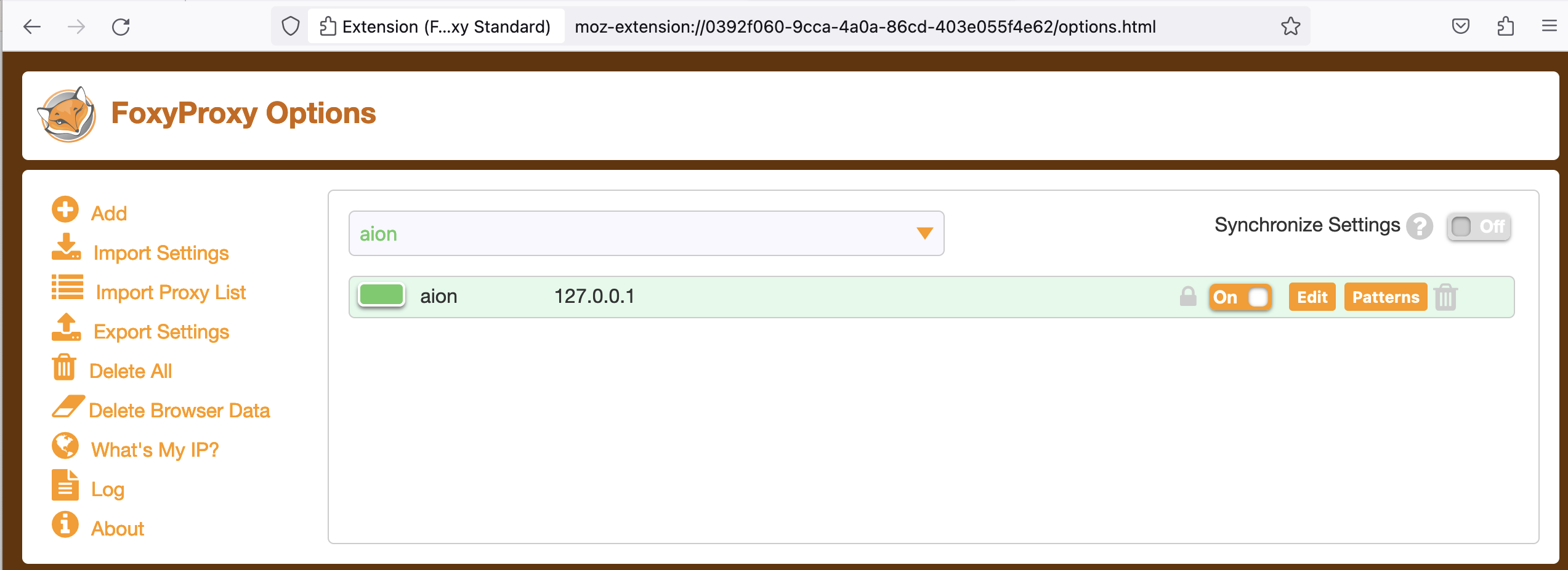

SOCKS 5 Proxy plugin (optional but VERY useful)

Many Big Data Analytics framework (including for the Jupyter Notenooks, the Dask dashboard etc.) involves a web interface (at the level of the master and/or the workers) you probably want to access in a relative transparent way.

Relying on SSH tunnels forwarding is of course one way opf proceeding, yet that's not the most convenient. A more user-friendly approach consists in rely on a SOCKS proxy, which is basically an SSH tunnel in which specific applications forward their traffic down the tunnel to the server, and then on the server end, the proxy forwards the traffic out to the general Internet. Unlike a VPN, a SOCKS proxy has to be configured on an app by app basis on the client machine, but can be set up without any specialty client agents.

These steps were also described in the Preliminaries tutorial.

Setting Up the Tunnel

To initiate such a SOCKS proxy using SSH (listening on localhost:1080 for instance), you simply need to use the -D 1080 command line option when connecting to the cluster:

(laptop)$> ssh -D 1080 -C iris-cluster

-D: Tells SSH that we want a SOCKS tunnel on the specified port number (you can choose a number between 1025-65536)-C: Compresses the data before sending it

Configuring Firefox to Use the Tunnel: see Preliminaries tutorial

We will see later on (in the section dedicated to Spark) how to effectively use this configuration.

Getting Started with Hadoop

Hadoop (2.10.0) is provided to you as a module:

module av Hadoop

module load tools/Hadoop

When doing that, the Hadoop distribution is installed in $EBROOTHADOOP (this is set by Easybuild for any loaded software.)

The below instructions are based on the official tutorial.

Hadoop in Single mode

By default, Hadoop is configured to run in a non-distributed mode, as a single Java process. This is useful for debugging.

Let's test it

mkdir -p runs/hadoop/single/input

cd runs/hadoop/single

# Prepare input data

mkdir input

cp ${EBROOTHADOOP}/etc/hadoop/*.xml input

# Map-reduce grep <pattern> -- result is produced in output/

hadoop jar ${EBROOTHADOOP}/share/hadoop/mapreduce/hadoop-mapreduce-examples-2.10.0.jar grep input output 'dfs[a-z.]+'

[...]

File System Counters

FILE: Number of bytes read=1292102

FILE: Number of bytes written=3190426

FILE: Number of read operations=0

FILE: Number of large read operations=0

FILE: Number of write operations=0

Map-Reduce Framework

Map input records=1

Map output records=1

Map output bytes=17

Map output materialized bytes=25

Input split bytes=168

Combine input records=0

Combine output records=0

Reduce input groups=1

Reduce shuffle bytes=25

Reduce input records=1

Reduce output records=1

Spilled Records=2

Shuffled Maps =1

Failed Shuffles=0

Merged Map outputs=1

GC time elapsed (ms)=5

Total committed heap usage (bytes)=1019740160

Shuffle Errors

BAD_ID=0

CONNECTION=0

IO_ERROR=0

WRONG_LENGTH=0

WRONG_MAP=0

WRONG_REDUCE=0

File Input Format Counters

Bytes Read=123

File Output Format Counters

Bytes Written=23

# Check the results on the local filesystem

$> cat output/*

1 dfsadmin

You can also view the output files on the distributed filesystem:

hdfs dfs -cat output/*

Pseudo-Distributed Operation

Hadoop can also be run on a single-node in a pseudo-distributed mode where each Hadoop daemon runs in a separate Java process. Follow the official tutorial to ensure you are running in Single Node Cluster

Once this is done, follow the official Wordcount instructions

# Interactive job on 2 nodes:

si -N 2 --ntasks-per-node 1 -c 16 -t 2:00:00

```

```bash

cd ~/tutorials/bigdata

# Pseudo-Distributed operation

mkdir -p run/shared/hadoop

Full cluster setup

Follow the official instructions of the Cluster Setup.

Once this is done, Repeat the execution of the official Wordcount example.

Apache Flink

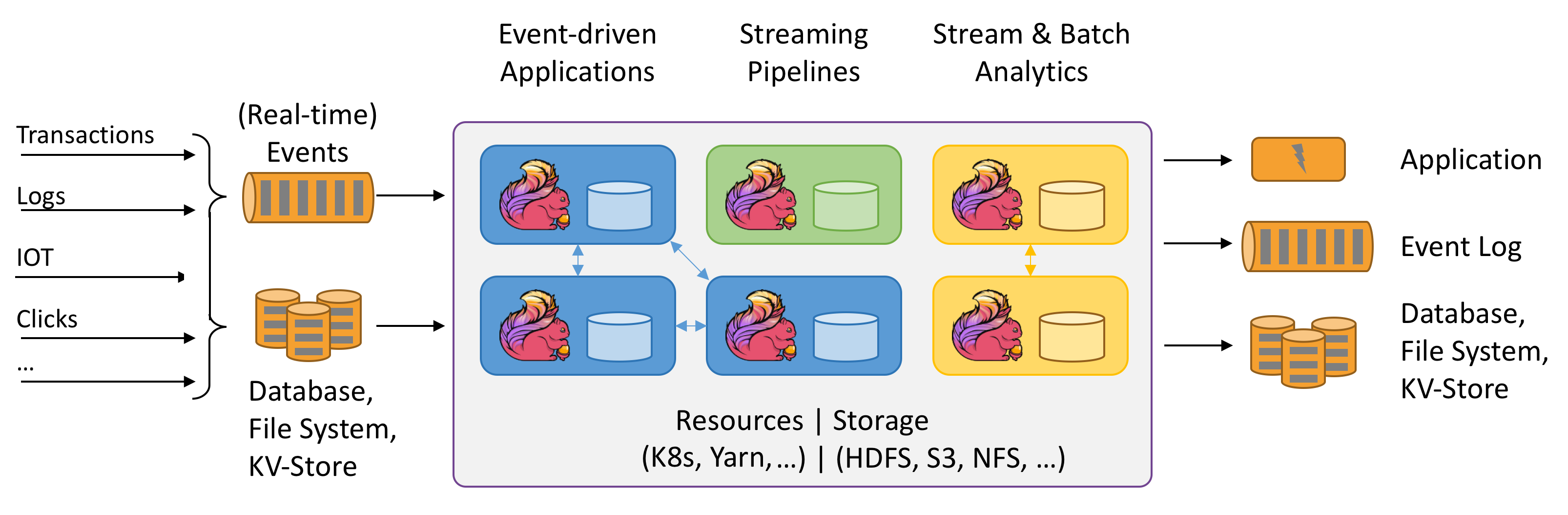

Apache Flink is a framework and distributed processing engine for stateful computations over unbounded and bounded data streams. Flink has been designed to run in all common cluster environments, perform computations at in-memory speed and at any scale.

Flink is available as a module:

$ module load devel/Flink

Follow the official Flink Hands-on training

It should be fine in standalone mode, yet to run Flink in a fully distributed fashion on top of a static (but possibly heterogeneous) cluster requires more efforts.

For instance, you won't be able to start directly the start-cluster.sh script as the log settings (among other) need to be defined and inherit from the Slurm reservation.

This complex setup is illustrated with another very popular Big Data analytics framework: Spark.

Big Data Analytics with Spark

The objective of this section is to compile and run on Apache Spark on top of the UL HPC platform.

Apache Spark is a large-scale data processing engine that performs in-memory computing. Spark offers bindings in Java, Scala, Python and R for building parallel applications. high-level APIs in Java, Scala, Python and R, and an optimized engine that supports general execution graphs. It also supports a rich set of higher-level tools including Spark SQL for SQL and structured data processing, MLlib for machine learning, GraphX for graph processing, and Spark Streaming.

As for Hadoop, we are first going to build Spark using Easybuild before performing some basic examples. More precisely, in this part, we will review the basic usage of Spark in two cases:

- a single conffiguration where the classical interactive wrappers (

pyspark,scalaandRwrappers) will be reviewed. - a Standalone cluster configuration - a simple cluster manager included with Spark that makes it easy to set up a cluster), where we will run the Pi estimation.

Building a more recent version of Spark with Easybuild

Spark is present as a module on the ULHPC platform yet it is a relatively old version (2.4.3).

So we are first going to install a newer version ([3.1.1(https://spark.apache.org/releases/spark-release-3-1-1.html)) using EasyBuild.

For this reason, you should first check the "Using and Building (custom) software with EasyBuild on the UL HPC platform" tutorial. As mentioned at that occasion, when you're looking for a more recent version of a given software (Spark here) than the one provided, you will typically search for the most recent version of Spark provided by Easybuild with eb -S <pattern>

As it might be tricky to guess the most appropriate version, the script scripts/suggest-easyconfigs -v <version> <pattern> is provided

Let's do that with Spark

### Have an interactive job for the build

(access)$> cd ~/tutorials/bigdata

(access)$> si -c4

# properly configure Easybuild prefix and local build environment

$ cat settings/default.sh

$ source settings/default.sh

$ eb --version

$ echo $EASYBUILD_PREFIX

Now let's check the available easyconfigs for Spark:

$ eb -S Spark

# search for an exact match

$ ./scripts/suggest-easyconfigs -v ${RESIF_VERSION_PROD} Spark

=> Searching Easyconfigs matching pattern 'Spark'

Spark-1.3.0.eb

Spark-1.4.1.eb

Spark-1.5.0.eb

Spark-1.6.0.eb

Spark-1.6.1.eb

Spark-2.0.0.eb

Spark-2.0.2.eb

Spark-2.2.0-Hadoop-2.6-Java-1.8.0_144.eb

Spark-2.2.0-Hadoop-2.6-Java-1.8.0_152.eb

Spark-2.2.0-intel-2017b-Hadoop-2.6-Java-1.8.0_152-Python-3.6.3.eb

Spark-2.3.0-Hadoop-2.7-Java-1.8.0_162.eb

Spark-2.4.0-Hadoop-2.7-Java-1.8.eb

Spark-2.4.0-foss-2018b-Python-2.7.15.eb

Spark-2.4.0-intel-2018b-Hadoop-2.7-Java-1.8-Python-3.6.6.eb

Spark-2.4.0-intel-2018b-Python-2.7.15.eb

Spark-2.4.0-intel-2018b-Python-3.6.6.eb

Spark-2.4.5-intel-2019b-Python-3.7.4-Java-1.8.eb

Spark-3.0.0-foss-2018b-Python-2.7.15.eb

Spark-3.0.0-intel-2018b-Python-2.7.15.eb

Spark-3.1.1-fosscuda-2020b.eb

Spark-3.1.1-foss-2020a-Python-3.8.2.eb

Total: 21 entries

... potential exact match for 2020b toolchain

Spark-3.1.1-fosscuda-2020b.eb

--> suggesting 'Spark-3.1.1-fosscuda-2020b.eb'

As can be seen, a GPU enabled version is proposed but won't be appropriate on Aion compute node. In that case, you'll likely want to create and adapt an existing easyconfig -- see official tutorial. While out of scope in this session, here is how you would typically proceed:

- Copy the easyconfig file locally:

eb --copy-ec Spark-3.1.1-fosscuda-2020b.eb Spark-3.1.1-foss-2020b.eb

- (eventually) Rename the file to match the target version

- Check on the website for the most up-to-date version of the software released

- Adapt the filename of the copied easyconfig to match the target version / toolchain

- Edit the content of the easyconfig

- You'll typically have to adapt the version of the dependencies (use again

scripts/suggest-easyconfigs -s dep1 dep2 [...]) and the checksum(s) of the source/patch files to match the static versions set for the target toolchain, enforce https urls etc.

You may have to repeat that process for the dependencies. And if you succeed, kindly do not forget to submitting your easyconfig as pull requests (--new-pr) to the Easybuild community.

To save some time, the appropriate easyconfigs file Spark-3.1.1-foss-2020b-Python-3.8.6.eb (and its dependency Apache Arrow) that you can use to build locally this application on top of the UL HPC Software set according to the recommended guidelines.

# If not done yet, properly configure Easybuild prefix and local build environment

$ source settings/default.sh

$ echo $EASYBUILD_PREFIX # Check the format which must be:

# <home>/.local/easybuild/<cluster>/<version>/epyc

Now you can build Spark from the provided easyconfigs -- the -r/--robot option control the robot search path for Easybuild (where to search for easyconfigs):

# Dry-run: check the matched dependencies

$ eb src/Spark-3.1.1-foss-2020b-Python-3.8.6.eb -D -r src: # <-- don't forget the trailing ':'

# only Arrow and Spark should noyt be checked

# Launch the build

$ eb src/Spark-3.1.1-foss-2020b-Python-3.8.6.eb -r src:

Installation will last ~8 minutes using a full Aion node (-c 128).

In general it is preferable to make builds within a screen session.

Once the build is completed, recall that it was installed under your homedir under ~/.local/easybuild/<cluster>/<version>/epyc when the default EASYBUILD_PREFIX target (for the sake of generality) to ~/.local/easybuild/.

So if you want to access the installed module within another job, you'll need to load the settings settings/default.sh (to correct the values of the variables EASYBUILD_PREFIX and LOCAL_MODULES and invoke mu:

$ source settings/default.sh

$ mu # shorcut for module use $LOCAL_MODULES

$ module av Spark # Must display the build version (3.1.1)

Interactive usage

Exit your reservation to reload one with the --exclusive flag to allocate an exclusive node -- it's better for big data analytics to dedicated full nodes (properly set).

Let's load the installed module:

(laptop)$ ssh aion-cluster

(access)$ si -c 128 --exclusive -t 2:00:00

$ source settings/default.sh # See above remark

$ mu # not required

$ module load devel/Spark/3.1.1

As in the GNU Parallel tutorial, let's create a list of images from the OpenImages V4 data set.

A copy of this data set is available on the ULHPC facility, under /work/projects/bigdata_sets/OpenImages_V4/.

Let's create a CSV file which contains a random selection of 1000 training files within this dataset (prefixed by a line number).

You may want to do it as follows (copy the full command):

# training set select first 10K random sort take only top 10 prefix by line number print to stdout AND in file

# ^^^^^^ ^^^^^^^^^^^^^ ^^^^^^^^ ^^^^^^^^^^^^^ ^^^^^^^^^^^^^^^^^^^^^^^^ ^^^^^^^^^^^^^^^^^^^^^^^^^^^^^^

$ find /work/projects/bigdata_sets/OpenImages_V4/train/ -print | head -n 10000 | sort -R | head -n 1000 | awk '{ print ++i","$0 }' | tee openimages_v4_filelist.csv

1,/work/projects/bigdata_sets/OpenImages_V4/train/6196380ea79283e0.jpg

2,/work/projects/bigdata_sets/OpenImages_V4/train/7f23f40740731c03.jpg

3,/work/projects/bigdata_sets/OpenImages_V4/train/dbfc1b37f45b3957.jpg

4,/work/projects/bigdata_sets/OpenImages_V4/train/f66087cdf8e172cd.jpg

5,/work/projects/bigdata_sets/OpenImages_V4/train/5efed414dd8b23d0.jpg

6,/work/projects/bigdata_sets/OpenImages_V4/train/1be054cb3021f6aa.jpg

7,/work/projects/bigdata_sets/OpenImages_V4/train/61446dee2ee9eb27.jpg

8,/work/projects/bigdata_sets/OpenImages_V4/train/dba2da75d899c3e7.jpg

9,/work/projects/bigdata_sets/OpenImages_V4/train/7ea06f092abc005e.jpg

10,/work/projects/bigdata_sets/OpenImages_V4/train/2db694eba4d4bb04.jpg

Download also another data files from Uber:

curl -o src/uber.csv https://gitlab.com/rahasak-labs/dot/-/raw/master/src/main/resources/uber.csv

Pyspark

PySpark is the Spark Python API and exposes Spark Contexts to the Python programming environment.

$> pyspark

pyspark

Python 3.8.6 (default, Sep 3 2021, 01:03:58)

[GCC 10.2.0] on linux

Type "help", "copyright", "credits" or "license" for more information.

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/__ / .__/\_,_/_/ /_/\_\ version 3.1.1

/_/

Using Python version 3.8.6 (default, Sep 3 2021 01:03:58)

Spark context Web UI available at http://aion-84.aion-cluster.uni.lux:4040

Spark context available as 'sc' (master = local[*], app id = local-1637268453800).

SparkSession available as 'spark'.

>>>

See this tutorial for playing with pyspark.

In particular, play with the build-in filter(), map(), and reduce() functions which are all common in functional programming.

>>> txt = sc.textFile('file:////home/users/svarrette/tutorials/bigdata/openimages_v4_filelist.csv')

>>> print(txt.count())

1000

>>> txt2 = sc.textFile('file:////home/users/svarrette/tutorials/bigdata/src/uber.csv')

>>> print(txt2.count())

652436

>>> python_lines = txt.filter(lambda line: 'python' in line.lower())

>>> print(python_lines.count())

6

>>> big_list = range(10000)

>>> rdd = sc.parallelize(big_list, 2)

>>> odds = rdd.filter(lambda x: x % 2 != 0)

>>> odds.take(5)

[1, 3, 5, 7, 9]

Scala Spark Shell

Spark includes a modified version of the Scala shell that can be used interactively.

Instead of running pyspark above, run the spark-shell command:

$> spark-shell

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

Spark context Web UI available at http://aion-1.aion-cluster.uni.lux:4040

Spark context available as 'sc' (master = local[*], app id = local-1637272004201).

Spark session available as 'spark'.

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 3.1.1

/_/

Using Scala version 2.12.10 (OpenJDK 64-Bit Server VM, Java 11.0.2)

Type in expressions to have them evaluated.

Type :help for more information.

scala>

R Spark Shell

The Spark R API is still experimental. Only a subset of the R API is available -- See the SparkR Documentation. Since this tutorial does not cover R, we are not going to use it.

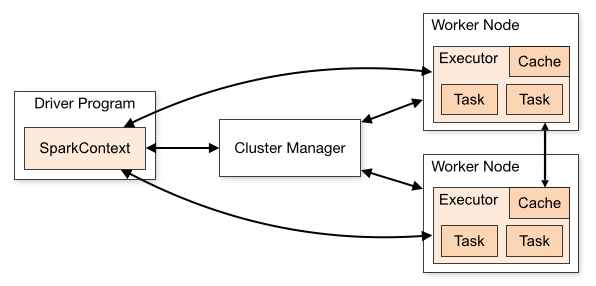

Running Spark in standalone cluster

Spark applications run as independent sets of processes on a cluster, coordinated by the SparkContext object in your main program (called the driver program).

Specifically, to run on a cluster, the SparkContext can connect to several types of cluster managers (either Spark’s own standalone cluster manager, Mesos or YARN), which allocate resources across applications. Once connected, Spark acquires executors on nodes in the cluster, which are processes that run computations and store data for your application. Next, it sends your application code (defined by JAR or Python files passed to SparkContext) to the executors. Finally, SparkContext sends tasks to the executors to run.

There are several useful things to note about this architecture:

- Each application gets its own executor processes, which stay up for the duration of the whole application and run tasks in multiple threads. This has the benefit of isolating applications from each other, on both the scheduling side (each driver schedules its own tasks) and executor side (tasks from different applications run in different JVMs). However, it also means that data cannot be shared across different Spark applications (instances of SparkContext) without writing it to an external storage system.

- Spark is agnostic to the underlying cluster manager. As long as it can acquire executor processes, and these communicate with each other, it is relatively easy to run it even on a cluster manager that also supports other applications (e.g. Mesos/YARN).

- The driver program must listen for and accept incoming connections from its executors throughout its lifetime (e.g., see spark.driver.port in the network config section). As such, the driver program must be network addressable from the worker nodes.

- Because the driver schedules tasks on the cluster, it should be run close to the worker nodes, preferably on the same local area network. If you'd like to send requests to the cluster remotely, it's better to open an RPC to the driver and have it submit operations from nearby than to run a driver far away from the worker nodes.

Cluster Manager

Spark currently supports three cluster managers:

- Standalone – a simple cluster manager included with Spark that makes it easy to set up a cluster.

- Apache Mesos – a general cluster manager that can also run Hadoop MapReduce and service applications.

- Hadoop YARN – the resource manager in Hadoop 2.

In this session, we will deploy a standalone cluster, which consists of performing the following workflow (with the objective to prepare a launcher script):

- create a master and the workers. Check the web interface of the master.

- submit a spark application to the cluster using the

spark-submitscript - Let the application run and collect the result

- stop the cluster at the end.

To facilitate these steps, Spark comes with a couple of scripts you can use to launch or stop your cluster, based on Hadoop's deploy scripts, and available in $EBROOTSPARK/sbin:

| Script | Description |

|---|---|

sbin/start-master.sh |

Starts a master instance on the machine the script is executed on. |

sbin/start-slaves.sh |

Starts a slave instance on each machine specified in the conf/slaves file. |

sbin/start-slave.sh |

Starts a slave instance on the machine the script is executed on. |

sbin/start-all.sh |

Starts both a master and a number of slaves as described above. |

sbin/stop-master.sh |

Stops the master that was started via the bin/start-master.sh script. |

sbin/stop-slaves.sh |

Stops all slave instances on the machines specified in the conf/slaves file. |

sbin/stop-all.sh |

Stops both the master and the slaves as described above. |

Yet the ULHPC team has designed a dedicated launcher script ./scripts/launcher.Spark.sh that exploits these script to quickly deploy and in a flexible way a Spark cluster over the resources allocated by slurm.

Quit your previous job - eventually detach from your screen session Ensure that you have connected by SSH to the cluster by opening an SOCKS proxy:

(laptop)$> ssh -D 1080 -C aion-cluster

Then make a new reservation across multiple full nodes:

# If not yet done, go to the appropriate directory

$ cd ~/tutorials/bigdata

# Play with -N to scale as you wish (or not) - below allocation is optimizing Aion compute nodes

# on iris: use '-N <N> --ntasks-per-node 2 -c 14'

# You'll likely need to reserve less nodes to satisfy all demands ;(

$ salloc -N 2 --ntasks-per-node 8 -c 16 --exclusive # --reservation=hpcschool

$ source settings/default.sh

$ module load devel/Spark

# Deploy an interactive Spark cluster **ACROSS** all reserved nodes

$ ./scripts/launcher.Spark.sh -i

SLURM_JOBID = 64441

SLURM_JOB_NODELIST = aion-[0003-0004]

SLURM_NNODES = 2

SLURM_NTASK = 16

Submission directory = /mnt/irisgpfs/users/svarrette/tutorials/bigdata

starting org.apache.spark.deploy.master.Master, logging to /home/users/svarrette/.spark/logs/spark-64441-org.apache.spark.deploy.master.Master-1-aion-0001.out

==========================================

============== Spark Master ==============

==========================================

url: spark://aion-0003:7077

Web UI: http://aion-0003:8082

===========================================

============ 16 Spark Workers ==============

===========================================

export SPARK_HOME=$EBROOTSPARK

export MASTER_URL=spark://aion-0003:7077

export SPARK_DAEMON_MEMORY=4096m

export SPARK_WORKER_CORES=16

export SPARK_WORKER_MEMORY=61440m

export SPARK_EXECUTOR_MEMORY=61440m

- create slave launcher script '/home/users/svarrette/.spark/worker/spark-start-slaves-64441.sh'

==========================================

*** Interactive mode ***

==========================================

Ex of submission command:

module load devel/Spark

export SPARK_HOME=$EBROOTSPARK

spark-submit \

--master spark://$(scontrol show hostname $SLURM_NODELIST | head -n 1):7077 \

--conf spark.driver.memory=${SPARK_DAEMON_MEMORY} \

--conf spark.executor.memory=${SPARK_EXECUTOR_MEMORY} \

--conf spark.python.worker.memory=${SPARK_WORKER_MEMORY} \

$SPARK_HOME/examples/src/main/python/pi.py 1000

As we are in interactive mode (-i option of the launcher script), copy/paste the export commands mentioned by the command to have them defined in your shell -- DO NOT COPY the above output but the one obtained on your side when launching the script.

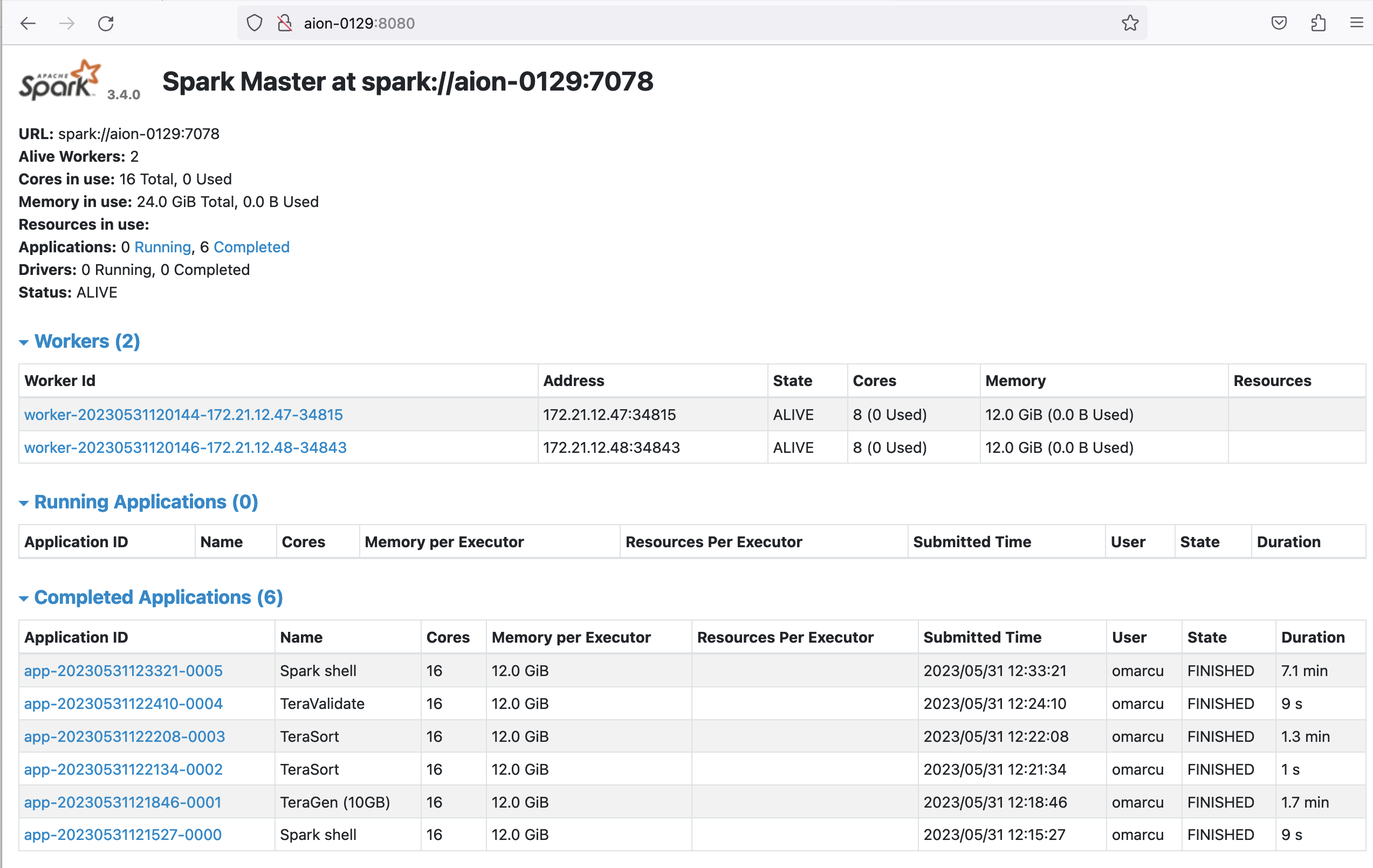

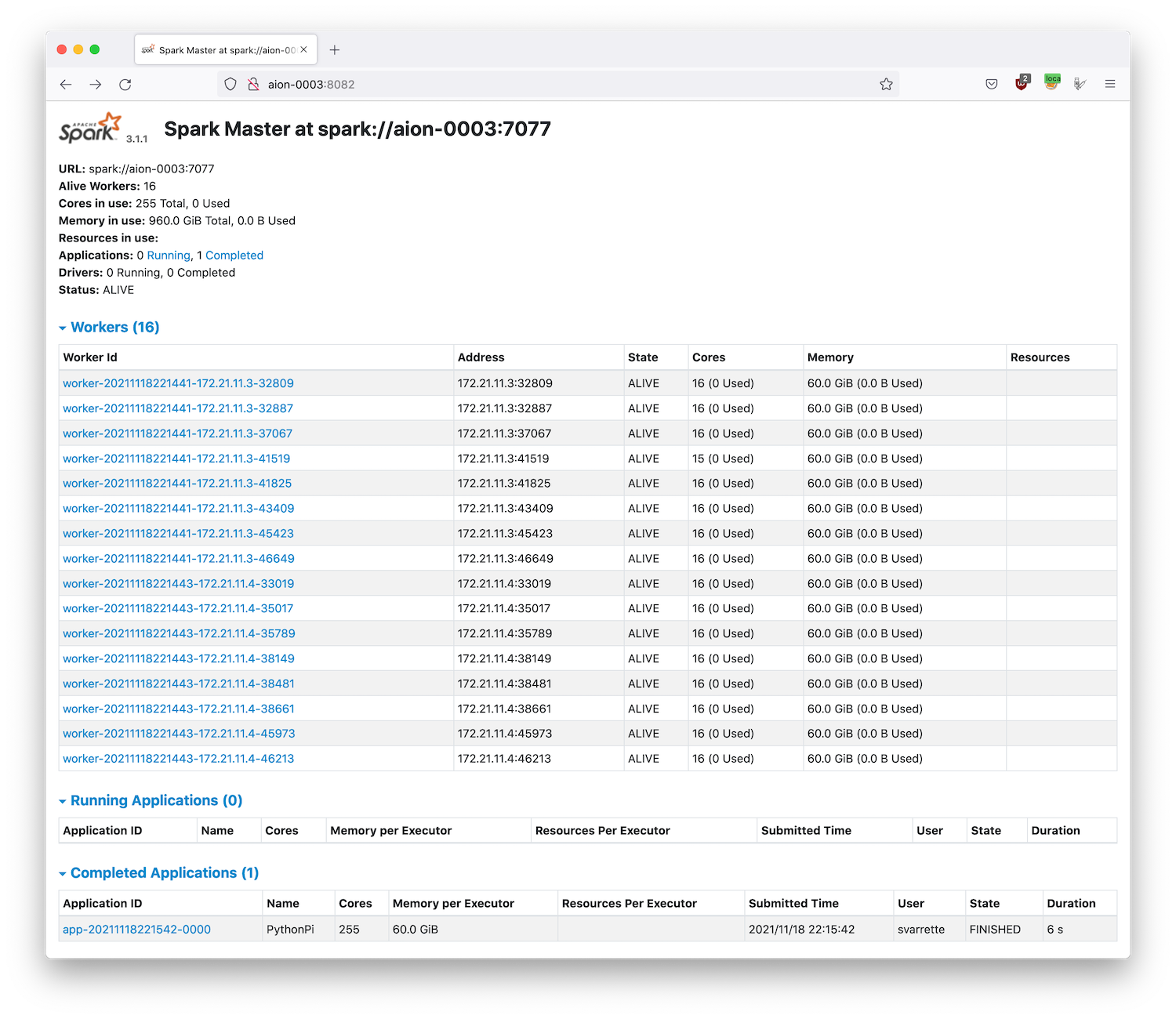

You can transparently access the Web UI (master web portal, on http://<IP>:8082) using a SOCKS 5 Proxy Approach.

Recall that this is possible as soon you have initiated an SSH connection with -D 1080 flag option to open on the local port 1080:

(laptop)$> ssh -D 1080 -C aion-cluster

Now, enable the ULHPC proxy setting from Foxy Proxy

extension (Firefox recommended) and access transparently the Web UI of the master process by entering the provided URL http://aion-<N>:8082 -- if you haven't enabled the remote DNS resolution, you will need to enter the url http://172.21.XX.YY:8082/ (adapt the IP).

It is worth to note that:

- The memory in use exceed the capacity of a single node, demonstrated if needed the scalability of the proposed setup

- The number of workers (and each of their memory) is automatically defined by the way you have request your jobs (

-N 2 --ntasks-per-node 8in this case). - Each worker is multithreaded and execute on 16 cores, except one which has 1 less core (thread) available (15) than the others -- note that this value is also automatically inherited by the slurm reservation (

-c 16in this case).- 1 core is indeed reserved for the master process.

As suggested, you can submit a Spark jobs to your freshly deployed cluster with spark-submit:

spark-submit \

--master spark://$(scontrol show hostname $SLURM_NODELIST | head -n 1):7077 \

--conf spark.driver.memory=${SPARK_DAEMON_MEMORY} \

--conf spark.executor.memory=${SPARK_EXECUTOR_MEMORY} \

--conf spark.python.worker.memory=${SPARK_WORKER_MEMORY} \

$SPARK_HOME/examples/src/main/python/pi.py 1000

And check the effect on the master portal. At the end, you should have a report of the Completed application as in the below screenshot.

When you have finished, don't forget to close your tunnel and disable FoxyProxy on your browser.

Passive jobs examples:

$> sbatch ./launcher.Spark.sh

[...]

Once finished, you can check the result of the default application submitted (in result_${SLURM_JOB_NAME}-${SLURM_JOB_ID}.out).

$> cat result_${SLURM_JOB_NAME}-${SLURM_JOB_ID}.out

Pi is roughly 3.141420

In case of problems, you can check the logs of the daemons in ~/.spark/logs/

Further Reading

You can find on the Internet many resources for expanding your HPC experience with Spark. Here are some links you might find useful to go further:

Deployment of Spark and HDFS with Singularity

This tutorial will build a Singularity container with Apache Spark, Hadoop HDFS and Java. We will deploy a Big Data cluster running Singularity through Slurm over Iris or Aion on CPU-only nodes.

Step 1: Required software

- Create a virtual machine with Ubuntu 18.04 and having Docker and Singularity installed.

- This project will leverage the following scripts:

scripts/Dockerfileand itsscripts/docker-entrypoint.sh

Step 2: Create the docker container

- Clean and create the Spark+Hadoop+Java Docker container that will be later used by Singularity

sudo docker system prune -a

sudo docker build . --tag sparkhdfs

- The Dockerfile contains steps to install Apache Spark, Hadoop and JDK11:

# Start from a base image

FROM ubuntu:18.04 AS builder

# Avoid prompts with tzdata

ENV DEBIAN_FRONTEND=noninteractive

# Set the working directory in the container

WORKDIR /usr/local

# Update Ubuntu Software repository

RUN apt-get update

RUN apt-get install -y curl unzip zip

# Install wget

RUN apt-get install -y wget

# Download Apache Hadoop

RUN wget https://downloads.apache.org/hadoop/core/hadoop-3.3.5/hadoop-3.3.5.tar.gz

RUN tar xvf hadoop-3.3.5.tar.gz

RUN mv hadoop-3.3.5 hadoop

# Download Apache Spark

RUN wget https://dlcdn.apache.org/spark/spark-3.4.0/spark-3.4.0-bin-hadoop3.tgz

RUN tar xvf spark-3.4.0-bin-hadoop3.tgz

RUN mv spark-3.4.0-bin-hadoop3 spark

# Final stage

FROM ubuntu:18.04

COPY --from=builder \

/usr/local/hadoop /opt/hadoop

# Set environment variables for Hadoop

ENV HADOOP_HOME=/opt/hadoop

ENV PATH=$PATH:$HADOOP_HOME/bin:$HADOOP_HOME/sbin

COPY --from=builder \

/usr/local/spark /opt/spark

# Set environment variables for Spark

ENV SPARK_HOME=/opt/spark

ENV PATH=$PATH:$SPARK_HOME/bin:$SPARK_HOME/sbin

# Install ssh and JDK11

RUN apt-get update && apt-get install -y openssh-server ca-certificates-java openjdk-11-jdk

ENV JAVA_HOME="/usr/lib/jvm/java-11-openjdk-amd64"

RUN ssh-keygen -t rsa -f /root/.ssh/id_rsa -q -P ""

RUN cat /root/.ssh/id_rsa.pub >> /root/.ssh/authorized_keys

RUN chmod 0600 /root/.ssh/authorized_keys

RUN echo "PermitRootLogin yes" >> /etc/ssh/sshd_config && \

echo "PubkeyAuthentication yes" >> /etc/ssh/sshd_config && \

echo "StrictHostKeyChecking no" >> /etc/ssh/ssh_config

# Copy the docker-entrypoint.sh script into the Docker image

COPY ./docker-entrypoint.sh /

# Set the entrypoint script to run when the container starts

ENTRYPOINT ["/docker-entrypoint.sh"]

# Expose the necessary ports

EXPOSE 50070 8080 7078 22 9000 8020

### DONE

- The docker-entrypoint.sh will later be used when running the Singularity container through Slurm.

#!/bin/bash

set -e

case "$1" in

sh|bash)

set -- "$@"

exec "$@"

;;

sparkMaster)

shift

echo "Running Spark Master on `hostname` ${SLURM_PROCID}"

/opt/spark/sbin/start-master.sh "$@" 1>$HOME/sparkMaster.out 2>&1 &

status=$?

if [ $status -ne 0 ]; then

echo "Failed to start Spark Master: $status"

exit $status

fi

exec tail -f $(ls -Art $HOME/sparkMaster.out | tail -n 1)

;;

sparkWorker)

shift

/opt/spark/sbin/start-worker.sh "$@" 1>$HOME/sworker-${SLURM_PROCID}.out 2>&1 &

status=$?

if [ $status -ne 0 ]; then

echo "Failed to start Spark worker: $status"

exit $status

fi

exec tail -f $(ls -Art $HOME/sworker-${SLURM_PROCID}.out | tail -n 1)

;;

sparkHDFSNamenode)

shift

echo "Running HDFS Namenode on `hostname` ${SLURM_PROCID}"

/opt/hadoop/bin/hdfs namenode -format

echo "Done format"

/opt/hadoop/bin/hdfs --daemon start namenode "$@" 1>$HOME/hdfsNamenode.out 2>&1 &

status=$?

if [ $status -ne 0 ]; then

echo "Failed to start HDFS Namenode: $status"

exit $status

fi

exec tail -f $(ls -Art $HOME/hdfsNamenode.out | tail -n 1)

;;

sparkHDFSDatanode)

shift

echo "Running HDFS datanode on `hostname` ${SLURM_PROCID}"

/opt/hadoop/bin/hdfs --daemon start datanode "$@" 1>$HOME/hdfsDatanode-${SLURM_PROCID}.out 2>&1 &

status=$?

if [ $status -ne 0 ]; then

echo "Failed to start HDFS datanode: $status"

exit $status

fi

exec tail -f $(ls -Art $HOME/hdfsDatanode-${SLURM_PROCID}.out | tail -n 1)

;;

esac

Step 3: Create the singularity container

- Either directly create the sparkhdfs.sif Singularity container, or use a sandbox to eventually modify/add before exporting to sif format. The sandbox is useful to further customize the Singularity container (e.g., modifying its docker-entry-point.sh).

#directly create a singularity container

sudo singularity build sparkhdfs.sif docker-daemon://sparkhdfs:latest

#create the sandbox directory from existing docker sparkhdfs container, then create the sparkhdfs.sif

sudo singularity build --sandbox sparkhdfs docker-daemon://sparkhdfs:latest

sudo singularity build sparkhdfs.sif sparkhdfs/

Step 4: Create a script to deploy Spark and HDFS

- The following script runSparkHDFS.sh runs singularity sparkhdfs.sif container for deploying the Spark standalone cluster (one Master and two workers) and the Hadoop HDFS Namenode and Datanodes.

- You should further customize this script (e.g., time, resources etc.)

- This script assumes that under your $HOME directory there are two subdirectories installed for spark and hadoop configuration files.

#!/bin/bash -l

#SBATCH -J SparkHDFS

#SBATCH -N 3 # Nodes

#SBATCH -n 3 # Tasks

#SBATCH --ntasks-per-node=1

#SBATCH --mem=16GB

#SBATCH -c 16 # Cores assigned to each task

#SBATCH --time=0-00:59:00

#SBATCH -p batch

#SBATCH --qos=normal

#SBATCH --mail-user=first.lastname@uni.lu

#SBATCH --mail-type=BEGIN,END

module load tools/Singularity

hostName="`hostname`"

echo "hostname=$hostName"

#save it for future job refs

myhostname="`hostname`"

rm coordinatorNode

touch coordinatorNode

cat > coordinatorNode << EOF

$myhostname

EOF

#create Spark configs

SPARK_CONF=${HOME}/spark/conf/spark-defaults.conf

cat > ${SPARK_CONF} << EOF

# Master settings

spark.master spark://$hostName:7078

# Memory settings

spark.driver.memory 2g

spark.executor.memory 12g

# Cores settings

spark.executor.cores 8

spark.cores.max 16

# Network settings

spark.driver.host $hostName

# Other settings

spark.logConf true

EOF

SPARK_ENVSH=${HOME}/spark/conf/spark-env.sh

cat > ${SPARK_ENVSH} << EOF

#!/usr/bin/env bash

SPARK_MASTER_HOST="$hostName"

SPARK_MASTER_PORT="7078"

SPARK_HOME="/opt/spark"

HADOOP_HOME="/opt/hadoop"

EOF

SPARK_L4J=${HOME}/spark/conf/log4j.properties

cat > ${SPARK_L4J} << EOF

# Set everything to be logged to the console

log4j.rootCategory=DEBUG, console

log4j.appender.console=org.apache.log4j.ConsoleAppender

log4j.appender.console.target=System.err

log4j.appender.console.layout=org.apache.log4j.PatternLayout

log4j.appender.console.layout.ConversionPattern=%d{yy/MM/dd HH:mm:ss} %p %c{1}: %m%n

# Settings to quiet third party logs that are too verbose

log4j.logger.org.spark_project.jetty=ERROR

log4j.logger.org.spark_project.jetty.util.component.AbstractLifeCycle=ERROR

log4j.logger.org.apache.spark.repl.SparkIMain$exprTyper=INFO

log4j.logger.org.apache.spark.repl.SparkILoop$SparkILoopInterpreter=INFO

EOF

### create HDFS config

HDFS_SITE=${HOME}/hadoop/etc/hadoop/hdfs-site.xml

cat > ${HDFS_SITE} << EOF

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>dfs.replication</name>

<value>1</value>

</property>

<property>

<name>dfs.namenode.name.dir</name>

<value>/tmp/hadoop/hdfs/name</value>

</property>

<property>

<name>dfs.datanode.data.dir</name>

<value>/tmp/hadoop/hdfs/data</value>

</property>

</configuration>

EOF

HDFS_CORESITE=${HOME}/hadoop/etc/hadoop/core-site.xml

cat > ${HDFS_CORESITE} << EOF

<?xml version="1.0" encoding="UTF-8"?>

<?xml-stylesheet type="text/xsl" href="configuration.xsl"?>

<configuration>

<property>

<name>fs.defaultFS</name>

<value>hdfs://$hostName:9000</value>

</property>

</configuration>

EOF

###

# Create a launcher script for SparkMaster and hdfsNamenode

#Once started, the Spark master will print out a spark://HOST:PORT to be used for submitting jobs

SPARKM_LAUNCHER=${HOME}/spark-start-master-${SLURM_JOBID}.sh

echo " - create SparkMaster and hdfsNamenode launcher script '${SPARKM_LAUNCHER}'"

cat << 'EOF' > ${SPARKM_LAUNCHER}

#!/bin/bash

echo "I am ${SLURM_PROCID} running on:"

hostname

#we are going to share an instance for Spark master and HDFS namenode

singularity instance start --bind $HOME/hadoop/logs:/opt/hadoop/logs,$HOME/hadoop/etc/hadoop:/opt/hadoop/etc/hadoop,$HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work \

sparkhdfs.sif shinst

singularity run --bind $HOME/hadoop/logs:/opt/hadoop/logs,$HOME/hadoop/etc/hadoop:/opt/hadoop/etc/hadoop instance://shinst \

sparkHDFSNamenode 2>&1 &

singularity run --bind $HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work instance://shinst \

sparkMaster

#the following example works for running without instance only the Spark Master

#singularity run --bind $HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work sparkhdfs.sif \

# sparkMaster

EOF

chmod +x ${SPARKM_LAUNCHER}

srun --exclusive -N 1 -n 1 -c 16 --ntasks-per-node=1 -l -o $HOME/SparkMaster-`hostname`.out \

${SPARKM_LAUNCHER} &

export SPARKMASTER="spark://$hostName:7078"

echo "Starting Spark workers and HDFS datanodes"

SPARK_LAUNCHER=${HOME}/spark-start-workers-${SLURM_JOBID}.sh

echo " - create Spark workers and HDFS datanodes launcher script '${SPARK_LAUNCHER}'"

cat << 'EOF' > ${SPARK_LAUNCHER}

#!/bin/bash

echo "I am ${SLURM_PROCID} running on:"

hostname

#we are going to share an instance for Spark workers and HDFS datanodes

singularity instance start --bind $HOME/hadoop/logs:/opt/hadoop/logs,$HOME/hadoop/etc/hadoop:/opt/hadoop/etc/hadoop,$HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work \

sparkhdfs.sif shinst

singularity run --bind $HOME/hadoop/logs:/opt/hadoop/logs,$HOME/hadoop/etc/hadoop:/opt/hadoop/etc/hadoop instance://shinst \

sparkHDFSDatanode 2>&1 &

singularity run --bind $HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work instance://shinst \

sparkWorker $SPARKMASTER -c 8 -m 12G

#the following without instance only Spark worker

#singularity run --bind $HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work sparkhdfs.sif \

# sparkWorker $SPARKMASTER -c 8 -m 8G

EOF

chmod +x ${SPARK_LAUNCHER}

srun --exclusive -N 2 -n 2 -c 16 --ntasks-per-node=1 -l -o $HOME/SparkWorkers-`hostname`.out \

${SPARK_LAUNCHER} &

pid=$!

sleep 3600s

wait $pid

echo $HOME

echo "Ready Stopping SparkHDFS instances"

- Now you can deploy Spark and HDFS with one command. Before that, under your $HOME directory we have to install Spark and Hadoop config directories.

#Login to Iris/Aion

ssh aion-cluster

#Make sure your $HOME directory contains the required scripts and configuration files

# You have cloned the tutorials on your laptop

# From bigdata directory e.g. /Users/ocm/bdhpc/tutorials/bigdata

#Replace omarcu with your username and from your laptop rsync as follows:

bigdata % rsync --rsh='ssh -p 8022' -avzu scripts/sparkhdfs/ aion-cluster:/home/users/omarcu/

- Your home directory looks as following:

#Login to Iris/Aion

ssh aion-cluster

#Make sure your $HOME directory contains the required scripts and configuration files

create mode 100644 /home/users/omarcu/Dockerfile

create mode 100755 /home/users/omarcu/clean.sh

create mode 100755 /home/users/omarcu/docker-entrypoint.sh

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/capacity-scheduler.xml

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/configuration.xsl

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/container-executor.cfg

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/hadoop-env.cmd

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/hadoop-env.sh

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/hadoop-metrics2.properties

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/hadoop-policy.xml

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/hadoop-user-functions.sh.example

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/hdfs-rbf-site.xml

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/httpfs-env.sh

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/httpfs-log4j.properties

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/httpfs-site.xml

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/kms-acls.xml

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/kms-env.sh

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/kms-log4j.properties

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/kms-site.xml

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/log4j.properties

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/mapred-env.cmd

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/mapred-env.sh

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/mapred-queues.xml.template

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/mapred-site.xml

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/shellprofile.d/example.sh

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/ssl-client.xml.example

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/ssl-server.xml.example

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/user_ec_policies.xml.template

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/workers

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/yarn-env.cmd

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/yarn-env.sh

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/yarn-site.xml

create mode 100644 /home/users/omarcu/hadoop/etc/hadoop/yarnservice-log4j.properties

create mode 100755 /home/users/omarcu/runSparkHDFS.sh

create mode 100644 /home/users/omarcu/spark/spark-terasort-1.2-SNAPSHOT-jar-with-dependencies.jar

Step 5: How the output looks

0 [omarcu@access1 ~]$ sbatch runSparkHDFS.sh

Submitted batch job 771126

0 [omarcu@access1 ~]$ sq

# squeue -u omarcu

JOBID PARTIT QOS NAME USER NODE CPUS ST TIME TIME_LEFT PRIORITY NODELIST(REASON)

771126 batch normal SparkHDFS omarcu 3 48 R 0:02 58:58 10398 aion-[0129,0131-0132]

0 [omarcu@access1 ~]$ ls

SparkWorkers-aion-0129.out

coordinatorNode

hdfsDatanode-0.out

hdfsNamenode.out

runSparkMaster.sh

slurm-771126.out

spark-start-master-771126.sh

sparkMaster.out

sworker-0.out

SparkMaster-aion-0129.out

clean.sh

hadoop

hdfsDatanode-1.out

runSparkHDFS.sh

spark

spark-start-workers-771126.sh

sparkhdfs.sif

sworker-1.out

[omarcu@access1 ~]$ cat slurm-771126.out

hostname=aion-0129

- create SparkMaster and hdfsNamenode launcher script '/home/users/omarcu/spark-start-master-771126.sh'

Starting Spark workers and HDFS datanodes

- create Spark workers and HDFS datanodes launcher script '/home/users/omarcu/spark-start-workers-771126.sh'

0 [omarcu@access1 ~]$ cat spark-start-master-771126.sh

#!/bin/bash

echo "I am ${SLURM_PROCID} running on:"

hostname

#we are going to share an instance for Spark master and HDFS namenode

singularity instance start --bind $HOME/hadoop/logs:/opt/hadoop/logs,$HOME/hadoop/etc/hadoop:/opt/hadoop/etc/hadoop,$HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work \

sparkhdfs.sif shinst

singularity run --bind $HOME/hadoop/logs:/opt/hadoop/logs,$HOME/hadoop/etc/hadoop:/opt/hadoop/etc/hadoop instance://shinst \

sparkHDFSNamenode 2>&1 &

singularity run --bind $HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work instance://shinst \

sparkMaster

0 [omarcu@access1 ~]$ cat spark-start-workers-771126.sh

#!/bin/bash

echo "I am ${SLURM_PROCID} running on:"

hostname

#we are going to share an instance for Spark workers and HDFS datanodes

singularity instance start --bind $HOME/hadoop/logs:/opt/hadoop/logs,$HOME/hadoop/etc/hadoop:/opt/hadoop/etc/hadoop,$HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work \

sparkhdfs.sif shinst

singularity run --bind $HOME/hadoop/logs:/opt/hadoop/logs,$HOME/hadoop/etc/hadoop:/opt/hadoop/etc/hadoop instance://shinst \

sparkHDFSDatanode 2>&1 &

singularity run --bind $HOME/spark/conf:/opt/spark/conf,$HOME/spark/logs:/opt/spark/logs,$HOME/spark/work:/opt/spark/work instance://shinst \

sparkWorker $SPARKMASTER -c 8 -m 12G

0 [omarcu@access1 ~]$ less spark/logs/spark-omarcu-org.apache.spark.deploy.master.Master-1-aion-0129.out

Spark Command: /usr/lib/jvm/java-11-openjdk-amd64/bin/java -cp /opt/spark/conf/:/opt/spark/jars/* -Xmx1g org.apache.spark.deploy.master.Master --host aion-0129 --port 7078 --webui-port 8080

========================================

Using Spark's default log4j profile: org/apache/spark/log4j2-defaults.properties

23/05/31 12:01:44 INFO Master: Started daemon with process name: 74@aion-0129

23/05/31 12:01:44 INFO SignalUtils: Registering signal handler for TERM

23/05/31 12:01:44 INFO SignalUtils: Registering signal handler for HUP

23/05/31 12:01:44 INFO SignalUtils: Registering signal handler for INT

23/05/31 12:01:44 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

23/05/31 12:01:45 INFO SecurityManager: Changing view acls to: omarcu

23/05/31 12:01:45 INFO SecurityManager: Changing modify acls to: omarcu

23/05/31 12:01:45 INFO SecurityManager: Changing view acls groups to:

23/05/31 12:01:45 INFO SecurityManager: Changing modify acls groups to:

23/05/31 12:01:45 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: omarcu; groups with view permissions: EMPTY; users with modify permissions: omarcu; groups with modify permissions: EMPTY

23/05/31 12:01:45 INFO Utils: Successfully started service 'sparkMaster' on port 7078.

23/05/31 12:01:45 INFO Master: Starting Spark master at spark://aion-0129:7078

23/05/31 12:01:45 INFO Master: Running Spark version 3.4.0

23/05/31 12:01:45 INFO JettyUtils: Start Jetty 0.0.0.0:8080 for MasterUI

23/05/31 12:01:45 INFO Utils: Successfully started service 'MasterUI' on port 8080.

23/05/31 12:01:45 INFO MasterWebUI: Bound MasterWebUI to 0.0.0.0, and started at http://aion-0129:8080

23/05/31 12:01:45 INFO Master: I have been elected leader! New state: ALIVE

23/05/31 12:01:46 INFO Master: Registering worker 172.21.12.48:34843 with 8 cores, 12.0 GiB RAM

23/05/31 12:01:51 INFO Master: Registering worker 172.21.12.47:34815 with 8 cores, 12.0 GiB RAM

- Spark is running at http://aion-0129:8080 with two workers.

- Running HDFS Namenode on aion-0129/172.21.12.45:9000 with two datanodes.

Step 6: Running manually the Terasort application

-

We are going to use aion-0129 singularity shared instance to add a file to Hadoop HDFS and run our Spark application.

-

Take a shell to the singularity instance.

0 [omarcu@aion-0129 ~](771126 N/T/CN)$ module load tools/Singularity

0 [omarcu@aion-0129 ~](771126 N/T/CN)$ singularity instance list

INSTANCE NAME PID IP IMAGE

shinst 1406958 /home/users/omarcu/sparkhdfs.sif

0 [omarcu@aion-0129 ~](771126 N/T/CN)$ singularity shell instance://shinst

Singularity> bash

- Run Spark shell and quit (testing).

omarcu@aion-0129:~$ /opt/spark/bin/spark-shell --master spark://aion-0129:7078

Setting default log level to "WARN".

To adjust logging level use sc.setLogLevel(newLevel). For SparkR, use setLogLevel(newLevel).

23/05/31 12:15:26 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

Spark context Web UI available at http://aion-0129:4040

Spark context available as 'sc' (master = spark://aion-0129:7078, app id = app-20230531121527-0000).

Spark session available as 'spark'.

Welcome to

____ __

/ __/__ ___ _____/ /__

_\ \/ _ \/ _ `/ __/ '_/

/___/ .__/\_,_/_/ /_/\_\ version 3.4.0

/_/

Using Scala version 2.12.17 (OpenJDK 64-Bit Server VM, Java 11.0.19)

Type in expressions to have them evaluated.

Type :help for more information.

scala> :q

- Next, we submit our jobs - see details about our application at https://github.com/ehiggs/spark-terasort.

omarcu@aion-0129:~$ cd /opt/spark/

omarcu@aion-0129:/opt/spark$ bin/spark-submit --master spark://aion-0129:7078 --class com.github.ehiggs.spark.terasort.TeraGen /home/users/omarcu/spark/spark-terasort-1.2-SNAPSHOT-jar-with-dependencies.jar 10g hdfs://aion-0129:9000/terasort_in

23/05/31 12:18:45 INFO SparkContext: Running Spark version 3.4.0

23/05/31 12:18:45 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

23/05/31 12:18:45 INFO ResourceUtils: ==============================================================

23/05/31 12:18:45 INFO ResourceUtils: No custom resources configured for spark.driver.

23/05/31 12:18:45 INFO ResourceUtils: ==============================================================

23/05/31 12:18:45 INFO SparkContext: Submitted application: TeraGen (10GB)

23/05/31 12:18:45 INFO SparkContext: Spark configuration:

spark.app.name=TeraGen (10GB)

spark.app.startTime=1685535525515

spark.app.submitTime=1685535525437

spark.cores.max=16

spark.driver.extraJavaOptions=-Djava.net.preferIPv6Addresses=false -XX:+IgnoreUnrecognizedVMOptions --add-opens=java.base/java.lang=ALL-UNNAMED --add-opens=java.base/java.lang.invoke=ALL-UNNAMED --add-opens=java.base/java.lang.reflect=ALL-UNNAMED --add-opens=java.base/java.io=ALL-UNNAMED --add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/java.nio=ALL-UNNAMED --add-opens=java.base/java.util=ALL-UNNAMED --add-opens=java.base/java.util.concurrent=ALL-UNNAMED --add-opens=java.base/java.util.concurrent.atomic=ALL-UNNAMED --add-opens=java.base/sun.nio.ch=ALL-UNNAMED --add-opens=java.base/sun.nio.cs=ALL-UNNAMED --add-opens=java.base/sun.security.action=ALL-UNNAMED --add-opens=java.base/sun.util.calendar=ALL-UNNAMED --add-opens=java.security.jgss/sun.security.krb5=ALL-UNNAMED -Djdk.reflect.useDirectMethodHandle=false

spark.driver.host=aion-0129

spark.driver.memory=2g

spark.executor.cores=8

spark.executor.extraJavaOptions=-Djava.net.preferIPv6Addresses=false -XX:+IgnoreUnrecognizedVMOptions --add-opens=java.base/java.lang=ALL-UNNAMED --add-opens=java.base/java.lang.invoke=ALL-UNNAMED --add-opens=java.base/java.lang.reflect=ALL-UNNAMED --add-opens=java.base/java.io=ALL-UNNAMED --add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/java.nio=ALL-UNNAMED --add-opens=java.base/java.util=ALL-UNNAMED --add-opens=java.base/java.util.concurrent=ALL-UNNAMED --add-opens=java.base/java.util.concurrent.atomic=ALL-UNNAMED --add-opens=java.base/sun.nio.ch=ALL-UNNAMED --add-opens=java.base/sun.nio.cs=ALL-UNNAMED --add-opens=java.base/sun.security.action=ALL-UNNAMED --add-opens=java.base/sun.util.calendar=ALL-UNNAMED --add-opens=java.security.jgss/sun.security.krb5=ALL-UNNAMED -Djdk.reflect.useDirectMethodHandle=false

spark.executor.memory=12g

spark.jars=file:/home/users/omarcu/spark/spark-terasort-1.2-SNAPSHOT-jar-with-dependencies.jar

spark.logConf=true

spark.master=spark://aion-0129:7078

spark.submit.deployMode=client

spark.submit.pyFiles=

23/05/31 12:18:45 INFO ResourceProfile: Default ResourceProfile created, executor resources: Map(cores -> name: cores, amount: 8, script: , vendor: , memory -> name: memory, amount: 12288, script: , vendor: , offHeap -> name: offHeap, amount: 0, script: , vendor: ), task resources: Map(cpus -> name: cpus, amount: 1.0)

23/05/31 12:18:45 INFO ResourceProfile: Limiting resource is cpus at 8 tasks per executor

23/05/31 12:18:45 INFO ResourceProfileManager: Added ResourceProfile id: 0

23/05/31 12:18:45 INFO SecurityManager: Changing view acls to: omarcu

23/05/31 12:18:45 INFO SecurityManager: Changing modify acls to: omarcu

23/05/31 12:18:45 INFO SecurityManager: Changing view acls groups to:

23/05/31 12:18:45 INFO SecurityManager: Changing modify acls groups to:

23/05/31 12:18:45 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: omarcu; groups with view permissions: EMPTY; users with modify permissions: omarcu; groups with modify permissions: EMPTY

23/05/31 12:18:45 INFO Utils: Successfully started service 'sparkDriver' on port 43365.

23/05/31 12:18:45 INFO SparkEnv: Registering MapOutputTracker

23/05/31 12:18:46 INFO SparkEnv: Registering BlockManagerMaster

23/05/31 12:18:46 INFO BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

23/05/31 12:18:46 INFO BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up

23/05/31 12:18:46 INFO SparkEnv: Registering BlockManagerMasterHeartbeat

23/05/31 12:18:46 INFO DiskBlockManager: Created local directory at /tmp/blockmgr-f8ba2d41-2a8e-4119-8341-9cd78a1dba15

23/05/31 12:18:46 INFO MemoryStore: MemoryStore started with capacity 1048.8 MiB

23/05/31 12:18:46 INFO SparkEnv: Registering OutputCommitCoordinator

23/05/31 12:18:46 INFO JettyUtils: Start Jetty 0.0.0.0:4040 for SparkUI

23/05/31 12:18:46 INFO Utils: Successfully started service 'SparkUI' on port 4040.

23/05/31 12:18:46 INFO SparkContext: Added JAR file:/home/users/omarcu/spark/spark-terasort-1.2-SNAPSHOT-jar-with-dependencies.jar at spark://aion-0129:43365/jars/spark-terasort-1.2-SNAPSHOT-jar-with-dependencies.jar with timestamp 1685535525515

23/05/31 12:18:46 INFO StandaloneAppClient$ClientEndpoint: Connecting to master spark://aion-0129:7078...

23/05/31 12:18:46 INFO TransportClientFactory: Successfully created connection to aion-0129/172.21.12.45:7078 after 26 ms (0 ms spent in bootstraps)

23/05/31 12:18:46 INFO StandaloneSchedulerBackend: Connected to Spark cluster with app ID app-20230531121846-0001

23/05/31 12:18:46 INFO StandaloneAppClient$ClientEndpoint: Executor added: app-20230531121846-0001/0 on worker-20230531120144-172.21.12.47-34815 (172.21.12.47:34815) with 8 core(s)

23/05/31 12:18:46 INFO StandaloneSchedulerBackend: Granted executor ID app-20230531121846-0001/0 on hostPort 172.21.12.47:34815 with 8 core(s), 12.0 GiB RAM

23/05/31 12:18:46 INFO StandaloneAppClient$ClientEndpoint: Executor added: app-20230531121846-0001/1 on worker-20230531120146-172.21.12.48-34843 (172.21.12.48:34843) with 8 core(s)

23/05/31 12:18:46 INFO StandaloneSchedulerBackend: Granted executor ID app-20230531121846-0001/1 on hostPort 172.21.12.48:34843 with 8 core(s), 12.0 GiB RAM

23/05/31 12:18:46 INFO Utils: Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 44799.

23/05/31 12:18:46 INFO NettyBlockTransferService: Server created on aion-0129:44799

23/05/31 12:18:46 INFO BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

23/05/31 12:18:46 INFO BlockManagerMaster: Registering BlockManager BlockManagerId(driver, aion-0129, 44799, None)

23/05/31 12:18:46 INFO BlockManagerMasterEndpoint: Registering block manager aion-0129:44799 with 1048.8 MiB RAM, BlockManagerId(driver, aion-0129, 44799, None)

23/05/31 12:18:46 INFO BlockManagerMaster: Registered BlockManager BlockManagerId(driver, aion-0129, 44799, None)

23/05/31 12:18:46 INFO BlockManager: Initialized BlockManager: BlockManagerId(driver, aion-0129, 44799, None)

23/05/31 12:18:46 INFO StandaloneAppClient$ClientEndpoint: Executor updated: app-20230531121846-0001/0 is now RUNNING

23/05/31 12:18:46 INFO StandaloneAppClient$ClientEndpoint: Executor updated: app-20230531121846-0001/1 is now RUNNING

23/05/31 12:18:46 INFO StandaloneSchedulerBackend: SchedulerBackend is ready for scheduling beginning after reached minRegisteredResourcesRatio: 0.0

===========================================================================

===========================================================================

Input size: 10GB

Total number of records: 100000000

Number of output partitions: 2

Number of records/output partition: 50000000

===========================================================================

===========================================================================

23/05/31 12:18:47 INFO FileOutputCommitter: File Output Committer Algorithm version is 1

23/05/31 12:18:47 INFO FileOutputCommitter: FileOutputCommitter skip cleanup _temporary folders under output directory:false, ignore cleanup failures: false

23/05/31 12:18:47 INFO SparkContext: Starting job: runJob at SparkHadoopWriter.scala:83

23/05/31 12:18:47 INFO DAGScheduler: Got job 0 (runJob at SparkHadoopWriter.scala:83) with 2 output partitions

23/05/31 12:18:47 INFO DAGScheduler: Final stage: ResultStage 0 (runJob at SparkHadoopWriter.scala:83)

23/05/31 12:18:47 INFO DAGScheduler: Parents of final stage: List()

23/05/31 12:18:47 INFO DAGScheduler: Missing parents: List()

23/05/31 12:18:47 INFO DAGScheduler: Submitting ResultStage 0 (MapPartitionsRDD[1] at mapPartitionsWithIndex at TeraGen.scala:66), which has no missing parents

23/05/31 12:18:47 INFO MemoryStore: Block broadcast_0 stored as values in memory (estimated size 101.3 KiB, free 1048.7 MiB)

23/05/31 12:18:47 INFO MemoryStore: Block broadcast_0_piece0 stored as bytes in memory (estimated size 36.2 KiB, free 1048.7 MiB)

23/05/31 12:18:47 INFO BlockManagerInfo: Added broadcast_0_piece0 in memory on aion-0129:44799 (size: 36.2 KiB, free: 1048.8 MiB)

23/05/31 12:18:47 INFO SparkContext: Created broadcast 0 from broadcast at DAGScheduler.scala:1535

23/05/31 12:18:47 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 0 (MapPartitionsRDD[1] at mapPartitionsWithIndex at TeraGen.scala:66) (first 15 tasks are for partitions Vector(0, 1))

23/05/31 12:18:47 INFO TaskSchedulerImpl: Adding task set 0.0 with 2 tasks resource profile 0

23/05/31 12:18:48 INFO StandaloneSchedulerBackend$StandaloneDriverEndpoint: Registered executor NettyRpcEndpointRef(spark-client://Executor) (172.21.12.47:34826) with ID 0, ResourceProfileId 0

23/05/31 12:18:48 INFO BlockManagerMasterEndpoint: Registering block manager 172.21.12.47:40937 with 7.0 GiB RAM, BlockManagerId(0, 172.21.12.47, 40937, None)

23/05/31 12:18:48 INFO TaskSetManager: Starting task 0.0 in stage 0.0 (TID 0) (172.21.12.47, executor 0, partition 0, PROCESS_LOCAL, 7492 bytes)

23/05/31 12:18:48 INFO TaskSetManager: Starting task 1.0 in stage 0.0 (TID 1) (172.21.12.47, executor 0, partition 1, PROCESS_LOCAL, 7492 bytes)

23/05/31 12:18:48 INFO StandaloneSchedulerBackend$StandaloneDriverEndpoint: Registered executor NettyRpcEndpointRef(spark-client://Executor) (172.21.12.48:57728) with ID 1, ResourceProfileId 0

23/05/31 12:18:48 INFO BlockManagerMasterEndpoint: Registering block manager 172.21.12.48:36261 with 7.0 GiB RAM, BlockManagerId(1, 172.21.12.48, 36261, None)

23/05/31 12:18:48 INFO BlockManagerInfo: Added broadcast_0_piece0 in memory on 172.21.12.47:40937 (size: 36.2 KiB, free: 7.0 GiB)

23/05/31 12:20:15 INFO TaskSetManager: Finished task 1.0 in stage 0.0 (TID 1) in 86967 ms on 172.21.12.47 (executor 0) (1/2)

23/05/31 12:20:15 INFO TaskSetManager: Finished task 0.0 in stage 0.0 (TID 0) in 87277 ms on 172.21.12.47 (executor 0) (2/2)

23/05/31 12:20:15 INFO TaskSchedulerImpl: Removed TaskSet 0.0, whose tasks have all completed, from pool

23/05/31 12:20:15 INFO DAGScheduler: ResultStage 0 (runJob at SparkHadoopWriter.scala:83) finished in 88.278 s

23/05/31 12:20:15 INFO DAGScheduler: Job 0 is finished. Cancelling potential speculative or zombie tasks for this job

23/05/31 12:20:15 INFO TaskSchedulerImpl: Killing all running tasks in stage 0: Stage finished

23/05/31 12:20:15 INFO DAGScheduler: Job 0 finished: runJob at SparkHadoopWriter.scala:83, took 88.340332 s

23/05/31 12:20:15 INFO SparkHadoopWriter: Start to commit write Job job_202305311218463169431769019098759_0001.

23/05/31 12:20:15 INFO SparkHadoopWriter: Write Job job_202305311218463169431769019098759_0001 committed. Elapsed time: 83 ms.

23/05/31 12:20:15 INFO SparkContext: Starting job: count at TeraGen.scala:94

23/05/31 12:20:15 INFO DAGScheduler: Got job 1 (count at TeraGen.scala:94) with 2 output partitions

23/05/31 12:20:15 INFO DAGScheduler: Final stage: ResultStage 1 (count at TeraGen.scala:94)

23/05/31 12:20:15 INFO DAGScheduler: Parents of final stage: List()

23/05/31 12:20:15 INFO DAGScheduler: Missing parents: List()

23/05/31 12:20:15 INFO DAGScheduler: Submitting ResultStage 1 (MapPartitionsRDD[1] at mapPartitionsWithIndex at TeraGen.scala:66), which has no missing parents

23/05/31 12:20:15 INFO MemoryStore: Block broadcast_1 stored as values in memory (estimated size 4.0 KiB, free 1048.7 MiB)

23/05/31 12:20:15 INFO MemoryStore: Block broadcast_1_piece0 stored as bytes in memory (estimated size 2.3 KiB, free 1048.7 MiB)

23/05/31 12:20:15 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on aion-0129:44799 (size: 2.3 KiB, free: 1048.8 MiB)

23/05/31 12:20:15 INFO SparkContext: Created broadcast 1 from broadcast at DAGScheduler.scala:1535

23/05/31 12:20:15 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 1 (MapPartitionsRDD[1] at mapPartitionsWithIndex at TeraGen.scala:66) (first 15 tasks are for partitions Vector(0, 1))

23/05/31 12:20:15 INFO TaskSchedulerImpl: Adding task set 1.0 with 2 tasks resource profile 0

23/05/31 12:20:15 INFO TaskSetManager: Starting task 0.0 in stage 1.0 (TID 2) (172.21.12.48, executor 1, partition 0, PROCESS_LOCAL, 7492 bytes)

23/05/31 12:20:15 INFO TaskSetManager: Starting task 1.0 in stage 1.0 (TID 3) (172.21.12.47, executor 0, partition 1, PROCESS_LOCAL, 7492 bytes)

23/05/31 12:20:15 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on 172.21.12.47:40937 (size: 2.3 KiB, free: 7.0 GiB)

23/05/31 12:20:16 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on 172.21.12.48:36261 (size: 2.3 KiB, free: 7.0 GiB)

23/05/31 12:20:30 INFO TaskSetManager: Finished task 0.0 in stage 1.0 (TID 2) in 14657 ms on 172.21.12.48 (executor 1) (1/2)

23/05/31 12:20:30 INFO TaskSetManager: Finished task 1.0 in stage 1.0 (TID 3) in 14962 ms on 172.21.12.47 (executor 0) (2/2)

23/05/31 12:20:30 INFO TaskSchedulerImpl: Removed TaskSet 1.0, whose tasks have all completed, from pool

23/05/31 12:20:30 INFO DAGScheduler: ResultStage 1 (count at TeraGen.scala:94) finished in 14.972 s

23/05/31 12:20:30 INFO DAGScheduler: Job 1 is finished. Cancelling potential speculative or zombie tasks for this job

23/05/31 12:20:30 INFO TaskSchedulerImpl: Killing all running tasks in stage 1: Stage finished

23/05/31 12:20:30 INFO DAGScheduler: Job 1 finished: count at TeraGen.scala:94, took 14.977215 s

Number of records written: 100000000

23/05/31 12:20:30 INFO SparkContext: Invoking stop() from shutdown hook

23/05/31 12:20:30 INFO SparkContext: SparkContext is stopping with exitCode 0.

23/05/31 12:20:30 INFO SparkUI: Stopped Spark web UI at http://aion-0129:4040

23/05/31 12:20:30 INFO StandaloneSchedulerBackend: Shutting down all executors

23/05/31 12:20:30 INFO StandaloneSchedulerBackend$StandaloneDriverEndpoint: Asking each executor to shut down

23/05/31 12:20:30 INFO MapOutputTrackerMasterEndpoint: MapOutputTrackerMasterEndpoint stopped!

23/05/31 12:20:30 INFO MemoryStore: MemoryStore cleared

23/05/31 12:20:30 INFO BlockManager: BlockManager stopped

23/05/31 12:20:30 INFO BlockManagerMaster: BlockManagerMaster stopped

23/05/31 12:20:30 INFO OutputCommitCoordinator$OutputCommitCoordinatorEndpoint: OutputCommitCoordinator stopped!

23/05/31 12:20:31 INFO SparkContext: Successfully stopped SparkContext

23/05/31 12:20:31 INFO ShutdownHookManager: Shutdown hook called

23/05/31 12:20:31 INFO ShutdownHookManager: Deleting directory /tmp/spark-eca95b40-741a-4b10-860f-3a16cd2d850a

23/05/31 12:20:31 INFO ShutdownHookManager: Deleting directory /tmp/spark-fdbb3552-28c4-4abd-bc9c-eabf3a1435d3

omarcu@aion-0129:/opt/spark$ bin/spark-submit --master spark://aion-0129:7078 --class com.github.ehiggs.spark.terasort.TeraSort /home/users/omarcu/spark/spark-terasort-1.2-SNAPSHOT-jar-with-dependencies.jar hdfs://aion-0129:9000/terasort_in hdfs://aion-0129:9000/terasort_out

23/05/31 12:22:06 INFO SparkContext: Running Spark version 3.4.0

23/05/31 12:22:06 WARN NativeCodeLoader: Unable to load native-hadoop library for your platform... using builtin-java classes where applicable

23/05/31 12:22:06 INFO ResourceUtils: ==============================================================

23/05/31 12:22:06 INFO ResourceUtils: No custom resources configured for spark.driver.

23/05/31 12:22:06 INFO ResourceUtils: ==============================================================

23/05/31 12:22:06 INFO SparkContext: Submitted application: TeraSort

23/05/31 12:22:06 INFO SparkContext: Spark configuration:

spark.app.name=TeraSort

spark.app.startTime=1685535726750

spark.app.submitTime=1685535726689

spark.cores.max=16

spark.driver.extraJavaOptions=-Djava.net.preferIPv6Addresses=false -XX:+IgnoreUnrecognizedVMOptions --add-opens=java.base/java.lang=ALL-UNNAMED --add-opens=java.base/java.lang.invoke=ALL-UNNAMED --add-opens=java.base/java.lang.reflect=ALL-UNNAMED --add-opens=java.base/java.io=ALL-UNNAMED --add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/java.nio=ALL-UNNAMED --add-opens=java.base/java.util=ALL-UNNAMED --add-opens=java.base/java.util.concurrent=ALL-UNNAMED --add-opens=java.base/java.util.concurrent.atomic=ALL-UNNAMED --add-opens=java.base/sun.nio.ch=ALL-UNNAMED --add-opens=java.base/sun.nio.cs=ALL-UNNAMED --add-opens=java.base/sun.security.action=ALL-UNNAMED --add-opens=java.base/sun.util.calendar=ALL-UNNAMED --add-opens=java.security.jgss/sun.security.krb5=ALL-UNNAMED -Djdk.reflect.useDirectMethodHandle=false

spark.driver.host=aion-0129

spark.driver.memory=2g

spark.executor.cores=8

spark.executor.extraJavaOptions=-Djava.net.preferIPv6Addresses=false -XX:+IgnoreUnrecognizedVMOptions --add-opens=java.base/java.lang=ALL-UNNAMED --add-opens=java.base/java.lang.invoke=ALL-UNNAMED --add-opens=java.base/java.lang.reflect=ALL-UNNAMED --add-opens=java.base/java.io=ALL-UNNAMED --add-opens=java.base/java.net=ALL-UNNAMED --add-opens=java.base/java.nio=ALL-UNNAMED --add-opens=java.base/java.util=ALL-UNNAMED --add-opens=java.base/java.util.concurrent=ALL-UNNAMED --add-opens=java.base/java.util.concurrent.atomic=ALL-UNNAMED --add-opens=java.base/sun.nio.ch=ALL-UNNAMED --add-opens=java.base/sun.nio.cs=ALL-UNNAMED --add-opens=java.base/sun.security.action=ALL-UNNAMED --add-opens=java.base/sun.util.calendar=ALL-UNNAMED --add-opens=java.security.jgss/sun.security.krb5=ALL-UNNAMED -Djdk.reflect.useDirectMethodHandle=false

spark.executor.memory=12g

spark.jars=file:/home/users/omarcu/spark/spark-terasort-1.2-SNAPSHOT-jar-with-dependencies.jar

spark.logConf=true

spark.master=spark://aion-0129:7078

spark.serializer=org.apache.spark.serializer.KryoSerializer

spark.submit.deployMode=client

spark.submit.pyFiles=

23/05/31 12:22:06 INFO ResourceProfile: Default ResourceProfile created, executor resources: Map(cores -> name: cores, amount: 8, script: , vendor: , memory -> name: memory, amount: 12288, script: , vendor: , offHeap -> name: offHeap, amount: 0, script: , vendor: ), task resources: Map(cpus -> name: cpus, amount: 1.0)

23/05/31 12:22:06 INFO ResourceProfile: Limiting resource is cpus at 8 tasks per executor

23/05/31 12:22:06 INFO ResourceProfileManager: Added ResourceProfile id: 0

23/05/31 12:22:07 INFO SecurityManager: Changing view acls to: omarcu

23/05/31 12:22:07 INFO SecurityManager: Changing modify acls to: omarcu

23/05/31 12:22:07 INFO SecurityManager: Changing view acls groups to:

23/05/31 12:22:07 INFO SecurityManager: Changing modify acls groups to:

23/05/31 12:22:07 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: omarcu; groups with view permissions: EMPTY; users with modify permissions: omarcu; groups with modify permissions: EMPTY

23/05/31 12:22:07 INFO Utils: Successfully started service 'sparkDriver' on port 42767.

23/05/31 12:22:07 INFO SparkEnv: Registering MapOutputTracker

23/05/31 12:22:07 INFO SparkEnv: Registering BlockManagerMaster

23/05/31 12:22:07 INFO BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

23/05/31 12:22:07 INFO BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up

23/05/31 12:22:07 INFO SparkEnv: Registering BlockManagerMasterHeartbeat

23/05/31 12:22:07 INFO DiskBlockManager: Created local directory at /tmp/blockmgr-3be7f28e-d8a7-461a-9075-8dd0fd172ec8

23/05/31 12:22:07 INFO MemoryStore: MemoryStore started with capacity 1048.8 MiB

23/05/31 12:22:07 INFO SparkEnv: Registering OutputCommitCoordinator

23/05/31 12:22:07 INFO JettyUtils: Start Jetty 0.0.0.0:4040 for SparkUI

23/05/31 12:22:07 INFO Utils: Successfully started service 'SparkUI' on port 4040.

23/05/31 12:22:07 INFO SparkContext: Added JAR file:/home/users/omarcu/spark/spark-terasort-1.2-SNAPSHOT-jar-with-dependencies.jar at spark://aion-0129:42767/jars/spark-terasort-1.2-SNAPSHOT-jar-with-dependencies.jar with timestamp 1685535726750

23/05/31 12:22:07 INFO StandaloneAppClient$ClientEndpoint: Connecting to master spark://aion-0129:7078...

23/05/31 12:22:07 INFO TransportClientFactory: Successfully created connection to aion-0129/172.21.12.45:7078 after 30 ms (0 ms spent in bootstraps)

23/05/31 12:22:08 INFO StandaloneSchedulerBackend: Connected to Spark cluster with app ID app-20230531122208-0003

23/05/31 12:22:08 INFO StandaloneAppClient$ClientEndpoint: Executor added: app-20230531122208-0003/0 on worker-20230531120144-172.21.12.47-34815 (172.21.12.47:34815) with 8 core(s)

23/05/31 12:22:08 INFO StandaloneSchedulerBackend: Granted executor ID app-20230531122208-0003/0 on hostPort 172.21.12.47:34815 with 8 core(s), 12.0 GiB RAM

23/05/31 12:22:08 INFO StandaloneAppClient$ClientEndpoint: Executor added: app-20230531122208-0003/1 on worker-20230531120146-172.21.12.48-34843 (172.21.12.48:34843) with 8 core(s)

23/05/31 12:22:08 INFO StandaloneSchedulerBackend: Granted executor ID app-20230531122208-0003/1 on hostPort 172.21.12.48:34843 with 8 core(s), 12.0 GiB RAM

23/05/31 12:22:08 INFO Utils: Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 35531.

23/05/31 12:22:08 INFO NettyBlockTransferService: Server created on aion-0129:35531

23/05/31 12:22:08 INFO BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

23/05/31 12:22:08 INFO BlockManagerMaster: Registering BlockManager BlockManagerId(driver, aion-0129, 35531, None)

23/05/31 12:22:08 INFO BlockManagerMasterEndpoint: Registering block manager aion-0129:35531 with 1048.8 MiB RAM, BlockManagerId(driver, aion-0129, 35531, None)

23/05/31 12:22:08 INFO StandaloneAppClient$ClientEndpoint: Executor updated: app-20230531122208-0003/0 is now RUNNING

23/05/31 12:22:08 INFO StandaloneAppClient$ClientEndpoint: Executor updated: app-20230531122208-0003/1 is now RUNNING

23/05/31 12:22:08 INFO BlockManagerMaster: Registered BlockManager BlockManagerId(driver, aion-0129, 35531, None)

23/05/31 12:22:08 INFO BlockManager: Initialized BlockManager: BlockManagerId(driver, aion-0129, 35531, None)

23/05/31 12:22:08 INFO StandaloneSchedulerBackend: SchedulerBackend is ready for scheduling beginning after reached minRegisteredResourcesRatio: 0.0

23/05/31 12:22:09 INFO MemoryStore: Block broadcast_0 stored as values in memory (estimated size 192.6 KiB, free 1048.6 MiB)

23/05/31 12:22:09 INFO MemoryStore: Block broadcast_0_piece0 stored as bytes in memory (estimated size 32.7 KiB, free 1048.6 MiB)

23/05/31 12:22:09 INFO BlockManagerInfo: Added broadcast_0_piece0 in memory on aion-0129:35531 (size: 32.7 KiB, free: 1048.8 MiB)

23/05/31 12:22:09 INFO SparkContext: Created broadcast 0 from newAPIHadoopFile at TeraSort.scala:60

23/05/31 12:22:09 INFO FileInputFormat: Total input files to process : 2

23/05/31 12:22:09 INFO FileOutputCommitter: File Output Committer Algorithm version is 1

23/05/31 12:22:09 INFO FileOutputCommitter: FileOutputCommitter skip cleanup _temporary folders under output directory:false, ignore cleanup failures: false

23/05/31 12:22:09 INFO SparkContext: Starting job: runJob at SparkHadoopWriter.scala:83

23/05/31 12:22:09 INFO DAGScheduler: Registering RDD 0 (newAPIHadoopFile at TeraSort.scala:60) as input to shuffle 0

23/05/31 12:22:09 INFO DAGScheduler: Got job 0 (runJob at SparkHadoopWriter.scala:83) with 76 output partitions

23/05/31 12:22:09 INFO DAGScheduler: Final stage: ResultStage 1 (runJob at SparkHadoopWriter.scala:83)

23/05/31 12:22:09 INFO DAGScheduler: Parents of final stage: List(ShuffleMapStage 0)

23/05/31 12:22:09 INFO DAGScheduler: Missing parents: List(ShuffleMapStage 0)

23/05/31 12:22:09 INFO DAGScheduler: Submitting ShuffleMapStage 0 (hdfs://aion-0129:9000/terasort_in NewHadoopRDD[0] at newAPIHadoopFile at TeraSort.scala:60), which has no missing parents

23/05/31 12:22:09 INFO MemoryStore: Block broadcast_1 stored as values in memory (estimated size 4.6 KiB, free 1048.6 MiB)

23/05/31 12:22:09 INFO MemoryStore: Block broadcast_1_piece0 stored as bytes in memory (estimated size 2.9 KiB, free 1048.6 MiB)

23/05/31 12:22:09 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on aion-0129:35531 (size: 2.9 KiB, free: 1048.8 MiB)

23/05/31 12:22:09 INFO SparkContext: Created broadcast 1 from broadcast at DAGScheduler.scala:1535

23/05/31 12:22:09 INFO DAGScheduler: Submitting 76 missing tasks from ShuffleMapStage 0 (hdfs://aion-0129:9000/terasort_in NewHadoopRDD[0] at newAPIHadoopFile at TeraSort.scala:60) (first 15 tasks are for partitions Vector(0, 1, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14))

23/05/31 12:22:09 INFO TaskSchedulerImpl: Adding task set 0.0 with 76 tasks resource profile 0

23/05/31 12:22:09 INFO StandaloneSchedulerBackend$StandaloneDriverEndpoint: Registered executor NettyRpcEndpointRef(spark-client://Executor) (172.21.12.47:39364) with ID 0, ResourceProfileId 0

23/05/31 12:22:09 INFO BlockManagerMasterEndpoint: Registering block manager 172.21.12.47:36545 with 7.0 GiB RAM, BlockManagerId(0, 172.21.12.47, 36545, None)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 0.0 in stage 0.0 (TID 0) (172.21.12.47, executor 0, partition 0, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 1.0 in stage 0.0 (TID 1) (172.21.12.47, executor 0, partition 1, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 2.0 in stage 0.0 (TID 2) (172.21.12.47, executor 0, partition 2, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 3.0 in stage 0.0 (TID 3) (172.21.12.47, executor 0, partition 3, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 4.0 in stage 0.0 (TID 4) (172.21.12.47, executor 0, partition 4, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 5.0 in stage 0.0 (TID 5) (172.21.12.47, executor 0, partition 5, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 6.0 in stage 0.0 (TID 6) (172.21.12.47, executor 0, partition 6, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 7.0 in stage 0.0 (TID 7) (172.21.12.47, executor 0, partition 7, ANY, 7460 bytes)

23/05/31 12:22:10 INFO StandaloneSchedulerBackend$StandaloneDriverEndpoint: Registered executor NettyRpcEndpointRef(spark-client://Executor) (172.21.12.48:59970) with ID 1, ResourceProfileId 0

23/05/31 12:22:10 INFO BlockManagerMasterEndpoint: Registering block manager 172.21.12.48:38673 with 7.0 GiB RAM, BlockManagerId(1, 172.21.12.48, 38673, None)

23/05/31 12:22:10 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on 172.21.12.47:36545 (size: 2.9 KiB, free: 7.0 GiB)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 8.0 in stage 0.0 (TID 8) (172.21.12.48, executor 1, partition 8, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 9.0 in stage 0.0 (TID 9) (172.21.12.48, executor 1, partition 9, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 10.0 in stage 0.0 (TID 10) (172.21.12.48, executor 1, partition 10, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 11.0 in stage 0.0 (TID 11) (172.21.12.48, executor 1, partition 11, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 12.0 in stage 0.0 (TID 12) (172.21.12.48, executor 1, partition 12, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 13.0 in stage 0.0 (TID 13) (172.21.12.48, executor 1, partition 13, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 14.0 in stage 0.0 (TID 14) (172.21.12.48, executor 1, partition 14, ANY, 7460 bytes)

23/05/31 12:22:10 INFO TaskSetManager: Starting task 15.0 in stage 0.0 (TID 15) (172.21.12.48, executor 1, partition 15, ANY, 7460 bytes)

23/05/31 12:22:10 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on 172.21.12.48:38673 (size: 2.9 KiB, free: 7.0 GiB)